From Designer Experience to Robot Perception: Evaluating Animation-Based Motion Design

Abstract

Background Animation-based approaches for robot motion design have been explored through various tool-focused solutions, including 3D animation software integration and keyframe-based authoring systems. While these approaches have demonstrated improved workflow efficiency when compared to traditional hard-coding methods, research has predominantly focused on tool implementation rather than examining the perception-focused dimensions. Specifically, the relationship between specific usability characteristics of animation-based systems and their effects on both designer experience and viewer perception of the resulting robot motions remains largely unexplored. This gap limits our understanding of how and why animation-based approaches influence the quality and acceptance of robot motion design.

Methods To address this gap, we developed Blender2Motor, an exemplary animation-based system that converts Blender animations into servo motor controls, and conducted a two-part evaluation examining both designer and viewer perspectives. Eighteen user participants designed robot motions with the system, and 24 viewer participants evaluated the completed motions. We assessed users’ experiences through the technology acceptance model (TAM) and usability characteristics surveys, measured viewers’ robot perceptions using the Godspeed Questionnaire Series (GQS), and analyzed correlations between designer-side usability factors and both user acceptance and viewer perception outcomes.

Results Correlation analysis revealed that ‘rapid testing ease’ showed the strongest association with technology acceptance (ρ=0.803, p<0.001) and user satisfaction (ρ=0.798, p<0.001), suggesting that it is a critical factor in the acceptance of animation-based motion design. ‘Motion modification convenience’ and ‘keyframe animation intuitiveness’ also showed significant correlations with TAM scores. Notably, usability characteristics were differentially associated with perceived usefulness (PU) and perceived ease of use (PEOU): ‘modification convenience’ correlated more strongly with PEOU (ρ=0.717, p<0.001), while ‘animation intuitiveness’ correlated more strongly with PU (ρ=0.700, p=0.001). At the item level, users’ ‘motion expression freedom’ and ‘motor-animation consistency’ correlated significantly with items of viewers’ robot likability and perceived safety. However, no significant correlations emerged between users’ overall TAM scores and viewers’ GQS dimensions, suggesting that designer experience does not translate directly into viewer perception.

Conclusions This study advances beyond general assertions about improved ease of use and speed by identifying specific usability characteristics associated with designer acceptance and examining their relationship to viewer perception. Our findings show that rapid iteration capability and modification convenience are most strongly associated with designer acceptance, while consistency between the animation preview and physical motor output is associated with viewer perception. The absence of a direct correlation between overall designer acceptance and viewer perception suggests that tool usability and motion quality represent distinct dimensions requiring separate consideration in motion design system development. These insights contribute to understanding how animation-based frameworks can support multidisciplinary robot development teams, where designers and engineers collaborate with different expertise and tool proficiencies. Future research should explore design interventions that simultaneously optimize both designer experience and viewer perception outcomes.

Keywords:

HRI, Design, Animation, HCI, Motion Design, Interaction Design

1. Chasm Between Designers and Engineers and Current Limitations in Robot Design

Robot prototyping in multidisciplinary environments faces communication difficulties due to programming barriers and the absence of collaborative tools. Although Arduino significantly improved prototyping accessibility, its C++-based programming remains challenging for non-programmers; designers’ intended motions often differ from engineers’ implementations, and designers find it structurally difficult to test and modify robot behavior directly (Kondaveeti et al., 2021). These problems stem from communication gaps that arise when team members use different tools and methodologies, and from a lack of shared foundational knowledge, since each specialized field has its own language and systems (Nguyen & Mougenot, 2022; Mcharek et al., 2019). Because robots are physical systems, their development requires continuous convergent work rather than simple role division (Dautenhahn, 2007). Attempts at multidisciplinary collaborative robot development have changed participants’ perceptions and attitudes but have shown limitations in measuring actual performance and in transferring results to practical robot design environments (Vicente et al., 2018; Lin & Chen, 2023).

Various solutions have been proposed to address these problems, but fundamental limitations remain. Robot software frameworks such as ROS and MoveIt! aimed to lower the barriers of complex robot software through immediacy, transparency, and intuitiveness, but they still require C++ and Python knowledge, which makes direct use difficult for non-programmer designers (Coleman et al., 2014). Various tools have been developed to improve user accessibility in robot programming, including user-friendly visual interfaces, visualization approaches, robot simulators, and digital twin environments (Wang et al., 2024; Szafir & Szafir, 2021; Farley et al., 2022; Hao et al., 2019; Rivera et al., 2024; Fresnillo et al., 2023). However, these approaches still require ROS-based environments or focus on industrial robots or specific tasks, so they remain tools for robotics experts rather than designers.

Robot control interfaces in industrial settings aim to reduce programming needs from a managerial perspective so that non-engineers can operate systems, but they remain limited as tools for designers (Ji et al., 2018). Visual programming, primarily in the form of block-based programming, has emerged as an alternative. However, these approaches face limitations including scalability issues and difficulty transferring designs to actual robot environments (Alexandrova et al., 2015; Van Waveren et al., 2021). Although research shows that visual programming reduces cognitive stress compared to text-based programming, end-user development tools for social robot design remain insufficient (Thorgeirsson et al., 2024; Coronado et al., 2020).

2. Animation-Based Approaches for Natural Robot Movement

The biggest limitation of robot motion design through hard-coding is the difficulty of implementing lively and natural movements. Natural robot movement requires smooth curved trajectories rather than linear movements, which matters for both social acceptability and energy efficiency (Guillen et al., 2020). The two-thirds power law of human movement shows that the speed of natural movement varies systematically with path curvature rather than remaining constant (Lacquaniti, 1983), and solutions such as S-curve velocity profiles still require complex programming knowledge (Nguyen et al., 2008). Expressive robot movement is a crucial element in user interaction, and movement has a greater influence than form on animacy perception (Hu et al., 2025; Nakayama & Yamanaka, 2016).
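For reference, the two-thirds power law is commonly written as follows, with A(t) the angular velocity of the moving point, C(t) the path curvature, R(t) = 1/C(t) the radius of curvature, V(t) the tangential velocity, and K a gain factor that stays roughly constant within a movement segment:

```latex
A(t) = K \, C(t)^{2/3}
\quad\Longleftrightarrow\quad
V(t) = \frac{A(t)}{C(t)} = K \, C(t)^{-1/3} = K \, R(t)^{1/3}
```

Speed thus drops in tightly curved portions of a path and rises along flatter ones, a coupling that constant-speed linear interpolation between waypoints does not produce.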

The principles of Disney’s The Illusion of Life, such as Anticipation, Follow Through, Slow In/Out, Arc, Secondary Action, and Timing, are key techniques for creating liveliness through natural acceleration and deceleration, predictable motion patterns, and secondary movements (Johnston & Thomas, 1981). Researchers have proposed applying these animation principles in HRI to enhance robot expressiveness and user understanding (van Breemen, 2004; Ribeiro & Paiva, 2012), and empirical studies support their effectiveness: a systematic review of 27 studies found that animation techniques improve robot interaction quality, enhance understanding of robot intentions, and help people relate to robots (Schulz et al., 2019). Designing robots with movement in mind yields better results than static design, and using 3D animation has been shown to enhance robot expressiveness (Hoffman & Ju, 2014). However, limitations such as the need for programming knowledge still make it difficult for designers to implement and modify lively movements intuitively and in real time.
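The Slow In/Out principle can be made concrete with a small Python sketch that compares constant-speed (linear) in-betweening with an eased curve between two keyframes. The cubic smoothstep function used here is an illustrative assumption, not Blender’s own interpolation (Blender’s default keyframe interpolation is Bézier); it simply has zero slope at both keyframes, so the per-frame displacement starts small, peaks mid-way, and tapers off again.

```python
def lerp(a: float, b: float, s: float) -> float:
    """Linear interpolation between keyframe values a and b for s in [0, 1]."""
    return a + (b - a) * s


def smoothstep(t: float) -> float:
    """Cubic ease-in/ease-out: zero slope at t = 0 and t = 1 (slow in / slow out)."""
    return t * t * (3.0 - 2.0 * t)


def sample_between_keyframes(a: float, b: float, n: int, eased: bool) -> list[float]:
    """Sample n in-between values from keyframe a to keyframe b (n >= 2)."""
    if n < 2:
        raise ValueError("need at least two samples")
    values = []
    for i in range(n):
        t = i / (n - 1)                    # normalized time between the keyframes
        s = smoothstep(t) if eased else t  # eased or constant-speed progress
        values.append(lerp(a, b, s))
    return values


def step_sizes(values: list[float]) -> list[float]:
    """Per-frame displacement, a discrete stand-in for velocity."""
    return [b - a for a, b in zip(values, values[1:])]


if __name__ == "__main__":
    # A joint rotating from 0 to 90 degrees over 11 frames.
    linear = step_sizes(sample_between_keyframes(0.0, 90.0, 11, eased=False))
    eased = step_sizes(sample_between_keyframes(0.0, 90.0, 11, eased=True))
    print([round(v, 2) for v in linear])  # constant steps
    print([round(v, 2) for v in eased])   # small, then large, then small
```

The linear version moves the same distance every frame and starts and stops abruptly, whereas the eased version accelerates out of the first keyframe and decelerates into the second.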

3. Animation Tools for Robot Motion: A Tool-Focused Landscape

Approaches that generate robot motion through 3D animation tools suggest that animation-based approaches are well suited to implementing natural robot movements. For instance, recent approaches combining Maya with reinforcement learning and diffusion models show promising results (Serifi et al., 2024; Grandia et al., 2025). While these methods demonstrate the potential of animation-based approaches, they focus on training-based generation rather than the real-time design iteration that designers need. Attempts to use Blender for robot control have also been ongoing. The PEAR framework presented an approach for prototyping expressive animated robots by extending Blender’s features to animate different robot morphologies (Balit et al., 2018). Their system allows animators to use familiar 3D animation concepts and define control rigs for motors, emphasizing the advantage of reusing animation tools that designers already know. Similarly, The Robot Engine (TRE) proposed using Unity’s game engine tools to bridge the gap between engineers and designers, allowing movement in the virtual world to be transposed to physical robot actuators (Bartneck & Ju, 2014). Other studies have focused on generating natural movements for robotics research using Blender (Bruyninckx, 2004; Buys et al., 2010).

More recently, various frameworks have been developed to control servo motors by extracting keyframes from Blender animations and utilizing position values(kneek-koh, 2024; Hendricks, 2024; Hackaday, 2022; hisprofile, 2024; Singh & Ray, 2024; Andrade et al., 2013).

However, these existing studies have been primarily tool-focused—demonstrating that animation-based systems can be built and that they work better than hard-coding approaches. After designing these frameworks and systems, the conclusions typically state that they enable “easier to use” interfaces or “rapid prototyping” (Balit et al., 2018; Bartneck et al., 2014), without examining what specifically contributes to these improvements or how user experience with these characteristics translates into viewer perception of the resulting robot motions. The following questions remain: What specific usability elements of animation-based tools contribute to user experience? How do these elements influence technology acceptance? And crucially, do these user-side improvements translate into better-perceived robot motions from the viewer’s perspective?

In this study, we shift focus from tool development to perception evaluation, investigating how specific usability characteristics of animation-based systems influence both user acceptance and viewer perception of the resulting robot motions.

4. Research Questions

While previous research has demonstrated that animation-based tools are easier to use than hard-coding, the specific usability factors that contribute to user acceptance and their influence on viewer perception remain unexplored. To address this gap, we examine usability beyond simple ease of use, investigating multiple usability characteristics (Nielsen, 1994) and their effects on both technology acceptance and robot motion perception. This study investigates: (1) which usability characteristics most influence technology acceptance, (2) how these characteristics affect viewer perception of robot motions, and (3) the relationship between user acceptance and viewer perception. To address these questions, we designed a custom system based on existing frameworks and conducted a two-part evaluation study.

4. 1. RQ1: How Usability Characteristics Affect Technology Acceptance

The first research question examines how specific usability characteristics of the animation-based system influence users’ technology acceptance, measured through the Technology Acceptance Model (TAM) (Davis, 1989). This allows us to identify which usability characteristics most strongly correlate with Perceived Usefulness and Perceived Ease of Use. The eight usability characteristics examined are: 1) accessibility for non-programmers, 2) freedom of motion expression, 3) ease of multi-motor implementation, 4) utility of Blender preview functionality, 5) intuitiveness of keyframe animation approach, 6) convenience of motion editing and fine-tuning, 7) ease of rapid testing for multiple motion patterns, and 8) consistency between actual motor results and Blender animations.

4. 2. RQ2: How Usability Characteristics Affect Viewer Perception of Robot Motion

The second research question investigates how the system’s usability characteristics relate to viewers’ perceptions of the robot motions created through it. This question addresses whether characteristics that enhance the designer’s experience translate into better-perceived robot behaviors from the viewer’s perspective. We examine how usability characteristics correlate with viewers’ perceptions across five dimensions of the Godspeed Questionnaire Series (GQS): Anthropomorphism, Animacy, Likability, Perceived Intelligence, and Perceived Safety (Bartneck et al., 2009).

4. 3. RQ3: How User Technology Acceptance Affects Viewer Perception

The third research question examines how users’ technology acceptance (measured through TAM scores) relates to viewers’ robot perceptions across the five GQS dimensions. By examining this relationship, we aim to determine whether users’ Perceived Usefulness or Perceived Ease of Use has a greater influence on how viewers perceive the resulting robot motions.

Through these research questions, we aim to understand the relationship between designer experience with animation-based tools and viewer perception of robot motions. Rather than evaluating a specific tool’s effectiveness, we seek to identify which usability characteristics matter most in animation-based robot motion design and how these characteristics influence the perceived quality of robot behaviors. These perception-focused insights can inform the development of future animation-based tools that better support expressive robot motion design in multidisciplinary HRI environments.

5. System Design: Blender2Motor

The Blender2Motor system was developed to investigate the relationship between animation-based workflows and their perceived outcomes. This framework converts Blender animations into servo motor controls, enabling examination of how usability characteristics influence both user acceptance and viewer perception. Because many participants in our study had not worked with Arduino, we used Dynamixel servo motors, which support daisy-chaining, together with the U2D2 communication interface. Daisy-chaining is a serial connection topology in which multiple motors are connected in sequence through a single communication line, allowing all motors to be controlled through a single port. This simplified hardware setup lets designers focus on motion design rather than complex wiring and can be used immediately without separate hardware knowledge. Designers create animations in Blender and transmit them directly to the motors. The system comprises two main components: (1) a Blender Export module that extracts keyframe animation data and converts it into motor-compatible formats, and (2) a Python-based control module that manages motor playback.
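To illustrate why a daisy chain needs only one port, the sketch below builds a single broadcast packet that carries goal positions for several motors at once; each motor on the shared line reads only the slice tagged with its own ID. It assumes DYNAMIXEL Protocol 2.0 and an X-series control table (Goal Position at address 116, 4 bytes), neither of which the text specifies. In practice the DYNAMIXEL SDK’s GroupSyncWrite class assembles such packets, so this is only a hedged, byte-level picture of what travels through the U2D2, not the system’s actual code.

```python
import struct

# Assumptions (not stated in the paper): DYNAMIXEL Protocol 2.0 and an
# X-series control table, where Goal Position is a 4-byte value at address 116.
HEADER = bytes([0xFF, 0xFF, 0xFD, 0x00])
BROADCAST_ID = 0xFE
INST_SYNC_WRITE = 0x83
ADDR_GOAL_POSITION = 116
GOAL_POSITION_SIZE = 4


def crc16(data: bytes) -> int:
    """CRC-16 as used by Protocol 2.0: polynomial 0x8005, initial value 0, MSB first."""
    crc = 0
    for byte in data:
        crc ^= byte << 8
        for _ in range(8):
            if crc & 0x8000:
                crc = ((crc << 1) ^ 0x8005) & 0xFFFF
            else:
                crc = (crc << 1) & 0xFFFF
    return crc


def sync_write_goal_positions(goals: dict[int, int]) -> bytes:
    """Build one broadcast packet that sets the goal position of every listed motor."""
    params = struct.pack("<HH", ADDR_GOAL_POSITION, GOAL_POSITION_SIZE)
    for motor_id, position in sorted(goals.items()):
        if not 0 <= motor_id <= 252:
            raise ValueError(f"invalid motor ID {motor_id}")
        if not 0 <= position <= 4095:
            raise ValueError(f"position {position} outside 0..4095")
        # Each motor on the chain picks out its own (ID, data) slice.
        params += struct.pack("<BI", motor_id, position)
    # With IDs <= 252 and positions <= 4095, the reserved byte sequence
    # FF FF FD cannot appear in the parameters, so byte stuffing is skipped.
    length = 1 + len(params) + 2  # instruction + parameters + CRC
    packet = HEADER + struct.pack("<BHB", BROADCAST_ID, length, INST_SYNC_WRITE) + params
    return packet + struct.pack("<H", crc16(packet))


if __name__ == "__main__":
    print(sync_write_goal_positions({1: 2048, 2: 1024}).hex(" "))
```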

5. 1. System Usage Process

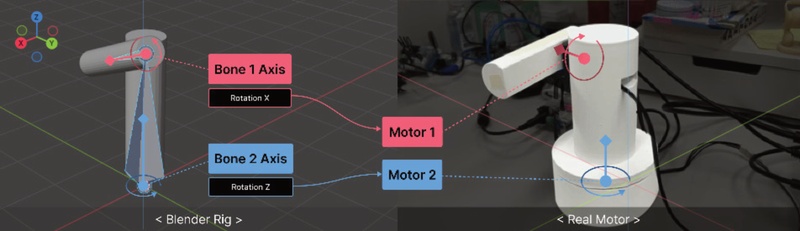

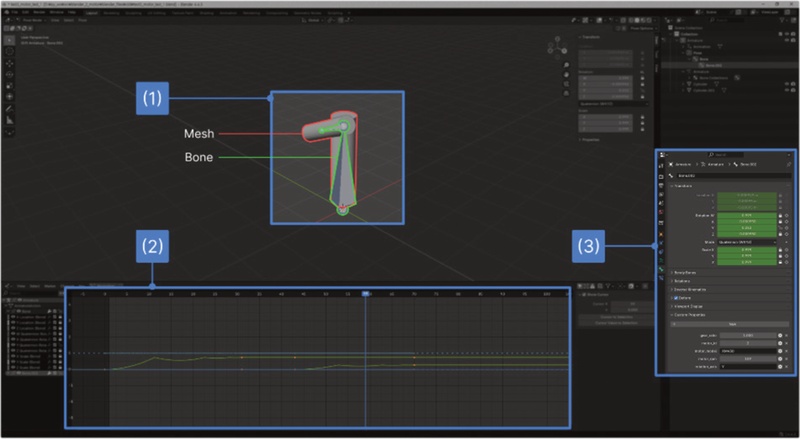

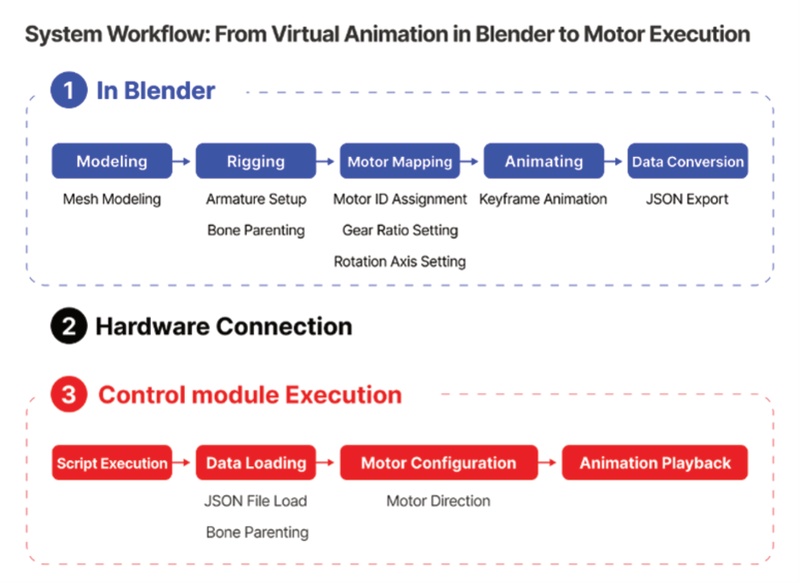

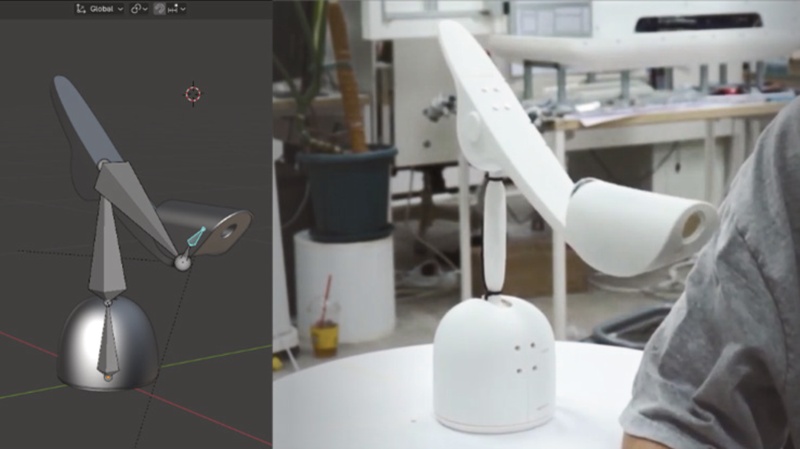

Blender is an open-source 3D modeling tool that allows direct access to animation data through its Python API, enabling programmatic export of keyframe information, bone rotation values, custom properties, and more. Using these capabilities, we built a system that converts animations created in Blender into motor control input files. [Fig. 2] shows the interface in Blender, where (1) shows the mesh and bones. The mesh is a 3D model representing the robot’s appearance, and bones are elements that define the robot’s skeletal structure. (2) is the Graph Editor, which displays the Bézier curves that define interpolation between keyframes, allowing adjustment of movement speed and smoothness. (3) is the Custom Properties area, where properties such as motor_id, gear_ratio, and rotation_axis are assigned to each bone to map it to a motor. By using Blender’s animation functions to define joint movements, the system converts users’ desired motions into motor data and reproduces them with actual motors. The overall user flow of the system is summarized in [Fig. 3].
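The per-bone custom properties can be thought of as a small mapping table from bones to motors. The Python sketch below is a hypothetical model of that table: the class MotorMapping, the function collect_mappings, the default gear ratio of 1.0, and the convention that rotation_axis names one Euler component are all assumptions for illustration. Inside Blender, the input dictionary would be filled from each pose bone’s custom properties; here plain dictionaries stand in so the sketch runs outside Blender.

```python
from dataclasses import dataclass

AXIS_INDEX = {"X": 0, "Y": 1, "Z": 2}


@dataclass(frozen=True)
class MotorMapping:
    """Hypothetical record of the custom properties attached to one bone."""
    bone_name: str
    motor_id: int
    gear_ratio: float
    rotation_axis: str  # "X", "Y" or "Z"

    def joint_angle(self, euler_xyz: tuple[float, float, float]) -> float:
        """Pick the single rotation component (radians) this motor follows."""
        return euler_xyz[AXIS_INDEX[self.rotation_axis]]


def collect_mappings(bone_props: dict[str, dict]) -> list[MotorMapping]:
    """Turn {bone_name: custom_properties} into motor mappings sorted by motor ID.

    Bones without a motor_id (e.g. helper or root bones) are skipped, and two
    bones claiming the same motor ID are rejected.
    """
    mappings: dict[int, MotorMapping] = {}
    for bone_name, props in bone_props.items():
        if "motor_id" not in props:
            continue
        axis = str(props.get("rotation_axis", "")).upper()
        if axis not in AXIS_INDEX:
            raise ValueError(f"{bone_name}: rotation_axis must be X, Y or Z")
        motor_id = int(props["motor_id"])
        if motor_id in mappings:
            raise ValueError(f"motor {motor_id} is assigned to two bones")
        gear_ratio = float(props.get("gear_ratio", 1.0))  # assumed default
        mappings[motor_id] = MotorMapping(bone_name, motor_id, gear_ratio, axis)
    return [mappings[key] for key in sorted(mappings)]
```

Rejecting duplicate IDs early matters on a daisy chain, where two bones writing to the same motor ID would silently overwrite each other’s commands.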

The export module uses Blender’s Python API to traverse the bone hierarchy and extract rotation data from each bone across the animation (Conlan, 2017). The rotation value of each bone is extracted per frame and converted into a motor position value that accounts for the gear ratio. The exported animation data is then structured and saved in JSON format.
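The angle-to-position step and the JSON output can be sketched as follows. The tick resolution (4,096 per revolution), the rest pose mapping to the center tick, the meaning of gear_ratio (motor revolutions per joint revolution), and the JSON layout are assumptions rather than the exporter’s documented format. Inside Blender, the per-frame joint angles would be sampled by stepping through the timeline (e.g., with scene.frame_set) and reading each bone’s rotation; here they are passed in as plain lists.

```python
import json
import math

# Assumptions for illustration: a 12-bit position range (0..4095 per revolution,
# as on DYNAMIXEL X-series) and a bone rest pose that maps to the center tick.
TICKS_PER_REV = 4096
CENTER_TICK = 2048


def angle_to_ticks(joint_angle_rad: float, gear_ratio: float = 1.0) -> int:
    """Convert a bone rotation (radians) to a motor position in ticks.

    gear_ratio is assumed to mean motor revolutions per joint revolution.
    """
    motor_angle = joint_angle_rad * gear_ratio
    ticks = CENTER_TICK + round(motor_angle / (2 * math.pi) * TICKS_PER_REV)
    if not 0 <= ticks < TICKS_PER_REV:
        raise ValueError(f"angle {joint_angle_rad:.3f} rad exceeds the single-turn range")
    return ticks


def export_motion(fps: int, tracks: dict[int, tuple[float, list[float]]]) -> str:
    """Serialize {motor_id: (gear_ratio, per-frame joint angles)} as JSON."""
    frame_counts = {len(angles) for _, angles in tracks.values()}
    if len(frame_counts) != 1:
        raise ValueError("tracks must be non-empty and cover the same frames")
    motors = [
        {"motor_id": motor_id,
         "positions": [angle_to_ticks(a, gear_ratio) for a in angles]}
        for motor_id, (gear_ratio, angles) in sorted(tracks.items())
    ]
    return json.dumps({"fps": fps, "motors": motors}, indent=2)
```

Converting to integer ticks at export time keeps the control module simple: it only has to stream precomputed positions at the stored frame rate.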

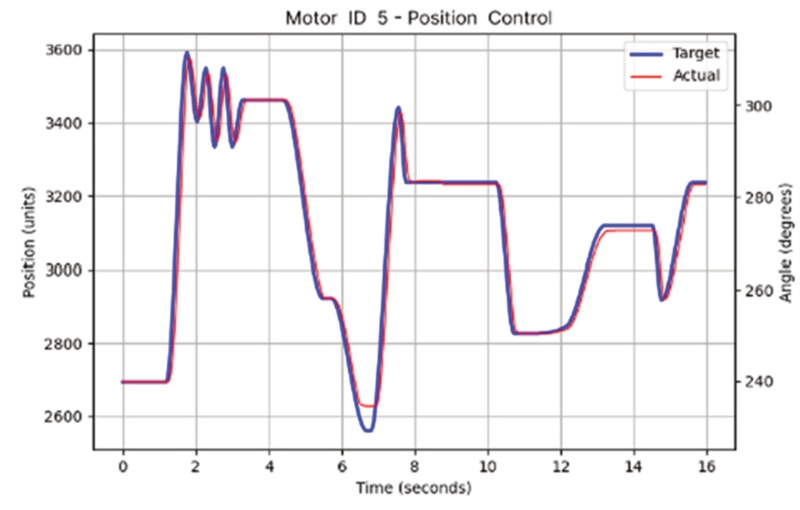

The control module loads the exported JSON file and drives the motors accordingly. Upon completion, the system generates motion result graphs that compare target and actual movements to visualize motion transfer accuracy. [Fig. 4] is an example from our robot’s operation, displaying the motor’s performance with target positions from Blender (blue lines) and actual motor positions (red lines) over time.
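A minimal sketch of the playback loop and of the target-versus-actual comparison behind [Fig. 4] might look as follows. write_goals and read_positions are hypothetical hooks standing in for the motor I/O (on DYNAMIXEL hardware they could wrap the SDK’s GroupSyncWrite and GroupSyncRead), and the JSON layout with "fps" and a "motors" list of {"motor_id", "positions"} entries is an assumed format. Root-mean-square error and maximum absolute error are one simple way to summarize transfer accuracy.

```python
import json
import math
import time
from typing import Callable


def play_motion(
    motion_json: str,
    write_goals: Callable[[dict[int, int]], None],
    read_positions: Callable[[list[int]], dict[int, int]],
    sleep: Callable[[float], None] = time.sleep,
) -> tuple[dict[int, list[int]], dict[int, list[int]]]:
    """Stream each frame's goal positions and record what the motors report."""
    data = json.loads(motion_json)
    period = 1.0 / data["fps"]
    ids = [m["motor_id"] for m in data["motors"]]
    n_frames = len(data["motors"][0]["positions"])
    target = {i: [] for i in ids}
    actual = {i: [] for i in ids}
    for frame in range(n_frames):
        goals = {m["motor_id"]: m["positions"][frame] for m in data["motors"]}
        write_goals(goals)
        sleep(period)  # naive pacing: ignores I/O time, so long clips drift
        measured = read_positions(ids)
        for i in ids:
            target[i].append(goals[i])
            actual[i].append(measured[i])
    return target, actual


def tracking_error(target: list[int], actual: list[int]) -> tuple[float, int]:
    """Root-mean-square and maximum absolute error, in ticks."""
    errors = [a - t for t, a in zip(target, actual)]
    rmse = math.sqrt(sum(e * e for e in errors) / len(errors))
    return rmse, max(abs(e) for e in errors)
```

A real controller would more likely schedule frames against time.monotonic() to avoid drift; the recorded lists can then be plotted as the target (blue) and actual (red) curves.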

[Fig. 5] shows an example of motion designed in Blender and its reproduction on an actual robot.

6. Evaluation Methods

User group evaluation involved 18 student participants organized into multidisciplinary teams of 4-5 members, comprising both engineers and designers. After learning how to use the Blender2Motor system, teams conducted independent robot motion design projects using the provided hardware. Participants designed motions in Blender and implemented them on robots. We surveyed all 18 participants upon completion.

Viewer group evaluation involved 24 participants who did not use the system directly. Participants watched instructional videos about the Blender2Motor system, observed robot operations demonstrating motions created by the user group, and completed surveys. This comparison examined how hands-on usage versus indirect exposure influences system perception.

User group surveys assessed participants’ prior experience with Blender, motor-driven projects, and 3D animation tools other than Blender, along with TAM, usability characteristics, and overall satisfaction. Viewer group surveys evaluated TAM and GQS factors. All items used a 7-point Likert scale.

All participants voluntarily participated in the study with anonymity guaranteed. Participants provided informed consent and could withdraw at any time. The collected data was statistically analyzed to evaluate system usability and user perceptions.

7. Analysis

The user group (N=18) consisted of 11 females and 7 males. The average age was 23.9 years (SD=3.6), ranging from 18 to 35 years. In terms of major distribution, industrial design and product design majors comprised the majority with 13 participants (72.2%), followed by mechanical engineering with 3 participants (16.7%), and visual design and UI/UX design with 1 participant each (5.6%). For the viewer group (N=24), there were 13 females and 11 males. The average age was 24.3 years (SD=4.1), ranging from 21 to 29 years. In terms of major distribution, industrial design and product design majors were the most numerous with 15 participants (62.5%), followed by mechanical engineering with 4 participants (16.7%), visual design with 3 participants (12.5%), and UI/UX design with 2 participants (8.3%).

7. 1. RQ1

We examined the relationship between users’ usability characteristics and TAM through correlation analysis. Given the limited sample size, Spearman correlation was used as the primary method, with Pearson correlation computed for visualization purposes (Pearson, 1895; Lee & Nicewander, 1988; Spearman, 1961). The results showed that all usability characteristics had significant positive correlations with the overall TAM score and system satisfaction, although correlation strength differed.
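Because Likert responses contain many ties, Spearman’s ρ is typically computed as the Pearson correlation of average ranks. The stdlib-only sketch below shows that computation; it yields the same coefficient as scipy.stats.spearmanr, which additionally reports a p-value. It illustrates the technique and is not the authors’ analysis script.

```python
import math


def average_ranks(values: list[float]) -> list[float]:
    """1-based ranks in which tied values share the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the run while the next value ties with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson product-moment correlation of two equal-length samples."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant variable")
    return sxy / math.sqrt(sxx * syy)


def spearman_rho(x: list[float], y: list[float]) -> float:
    """Spearman's rho as the Pearson correlation of tie-averaged ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length samples with at least two pairs")
    return pearson(average_ranks(x), average_ranks(y))
```

Working on ranks makes ρ depend only on the ordering of responses, which suits 7-point Likert data where the spacing between scale points is not guaranteed to be equal.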

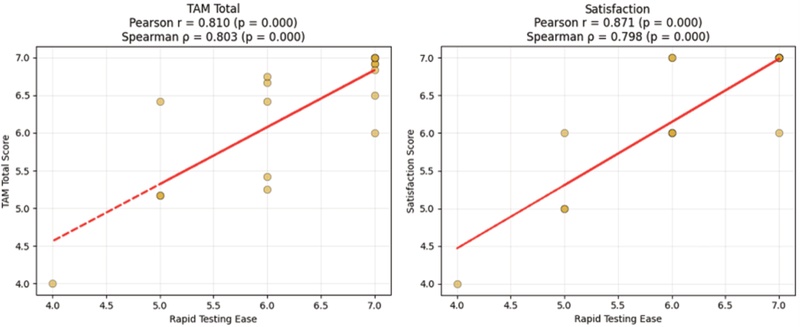

The correlation analysis revealed three usability characteristics with the highest correlations with technology acceptance. Rapid Testing Ease showed the strongest association with the overall TAM score (ρ = 0.803, p < 0.001) and also the highest correlation with user satisfaction (ρ = 0.798, p < 0.001) [Fig. 7]. This suggests a strong association between users’ perception of iterative testing capabilities and their evaluation of the system: the ability to quickly test and verify results is closely tied to acceptance.

Motion Modification Convenience showed the second highest correlation with the overall TAM score (ρ = 0.716, p < 0.001), indicating that post-editing functionality is also strongly associated with workflow acceptance.

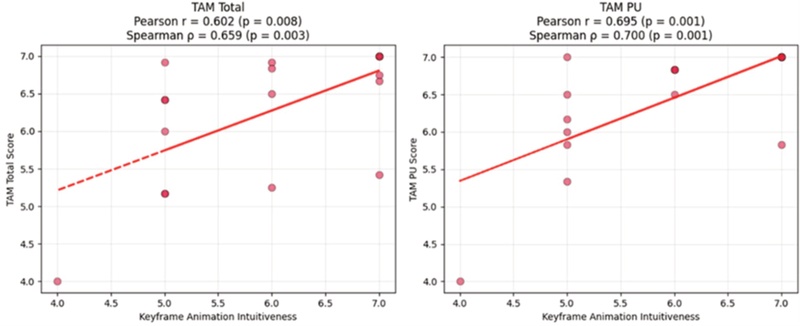

Keyframe Animation Intuitiveness ranked third (ρ = 0.659, p = 0.003) and correlated particularly strongly with perceived usefulness (PU) (ρ = 0.700, p = 0.001), indicating that intuitive animation creation methods are substantially associated with perceived system utility [Fig. 8].

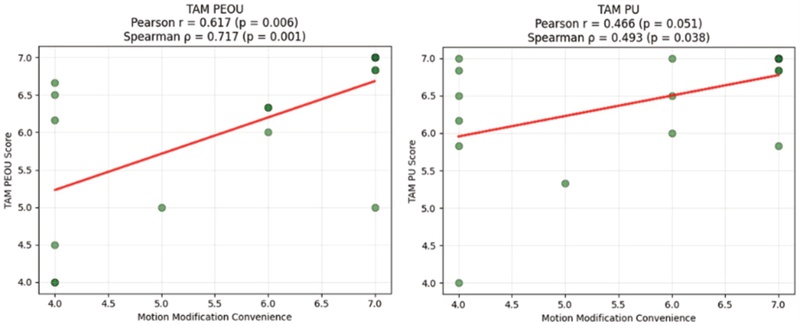

Analysis of TAM sub-components revealed a pattern where usability characteristics differently influenced perceived usefulness (PU) and perceived ease of use (PEOU). Motion Modification Convenience showed higher correlation with PEOU (ρ = 0.717, p < 0.001) than with PU (ρ = 0.493, p = 0.038) [Fig. 9]. This suggests that editing functionality is more strongly associated with reducing perceived complexity than with enhancing functional value perception.

Conversely, Keyframe Animation Intuitiveness showed a higher correlation with PU (ρ = 0.700, p = 0.001) than with PEOU (ρ = 0.526, p = 0.025). This indicates that intuitive animation methods are more strongly associated with perceived system utility than with operational simplicity. Preview Function Usefulness showed a moderate correlation with PEOU (ρ = 0.571, p = 0.013) and a weaker, borderline correlation with PU (ρ = 0.469, p = 0.050). Preview Function Usefulness also showed the second highest correlation with Satisfaction scores (ρ = 0.731, p < 0.001).

These results suggest that each usability characteristic shows a different correlation pattern with the PU and PEOU dimensions of TAM.

7. 2. RQ2

Analysis of the relationship between users’ usability characteristics and viewers’ GQS revealed notable patterns. No statistically significant correlations were found between the five main dimensions of the GQS (Anthropomorphism, Animacy, Likability, Perceived Intelligence, Perceived Safety) and users’ usability characteristics. However, at the detailed item level, a total of 9 significant correlations were found as shown in Tab. 2. This suggests that usability characteristics are selectively associated with specific concrete aspects rather than entire dimensions of robot perception.

Motion Expression Freedom showed significant correlations with the viewers’ Likability items ‘Dislike-Like’ (ρ = 0.476, p = 0.046), ‘Unfriendly-Friendly’ (ρ = 0.522, p = 0.026), and ‘Awful-Nice’ (ρ = 0.539, p = 0.021), as well as a positive correlation with the ‘Agitated-Calm’ item of Perceived Safety (ρ = 0.496, p = 0.036). This suggests that users’ ability to freely express desired motions through the system is associated with viewers perceiving the robot as more likable and calm.

Motion Modification Convenience showed a moderate correlation with the ‘Anxious-Relaxed’ item of viewers’ Perceived Safety (ρ = 0.499, p = 0.035), suggesting that users’ ease in modifying and fine-tuning motions is associated with viewers perceiving the robot as a safer and more comfortable presence.

Motor-Animation Match showed significant correlations with Likability items including ‘Dislike-Like’ (ρ = 0.534, p = 0.023), ‘Unfriendly-Friendly’ (ρ = 0.493, p = 0.038), and ‘Unpleasant-Pleasant’ (ρ = 0.511, p = 0.030). Notably, it also showed a correlation with the ‘Dead-Alive’ item in Animacy (ρ = 0.497, p = 0.036). This suggests that the consistency between virtual animation and actual motor movements is associated with robot animacy perception.

7. 3. RQ3

We conducted an analysis of the relationship between users’ TAM and viewers’ GQS. The results showed no significant correlations between TAM and any of the five dimensions of the GQS [Tab. 3]. Additionally, correlation analysis between TAM sub-components and individual items of the GQS also revealed no significant results meeting the p < 0.05 criterion.

This suggests that how users perceive the system’s usefulness and ease of use shows no significant correlation with how viewers perceive the robot when observing the motion outputs generated through that system.

7. 4. Additional Analyses

Comparing TAM scores between the user group, who used the system directly, and the viewer group, who only watched instructional videos, revealed significant differences [Fig. 10]. The most notable difference was in PEOU (t = 2.710, p = 0.010, Cohen’s d = 0.839), indicating that actual usage experience may substantially influence how participants perceive the system’s convenience.
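The group-comparison statistics can be computed as in the sketch below, which assumes Student’s independent-samples t-test with pooled variance (the text does not state whether a pooled or Welch test was used) and defines Cohen’s d with the same pooled standard deviation. Under those assumptions the two statistics are linked by t = d · sqrt(n1 · n2 / (n1 + n2)).

```python
import math
from statistics import mean, variance


def pooled_t_and_cohens_d(a: list[float], b: list[float]) -> tuple[float, float, int]:
    """Student's independent-samples t (pooled variance), Cohen's d, and degrees of freedom."""
    n1, n2 = len(a), len(b)
    if n1 < 2 or n2 < 2:
        raise ValueError("each group needs at least two observations")
    df = n1 + n2 - 2
    # Pooled standard deviation from the two sample variances (n - 1 denominators).
    pooled_sd = math.sqrt(((n1 - 1) * variance(a) + (n2 - 1) * variance(b)) / df)
    diff = mean(a) - mean(b)
    t = diff / (pooled_sd * math.sqrt(1 / n1 + 1 / n2))
    d = diff / pooled_sd
    return t, d, df
```

For a p-value, scipy.stats.ttest_ind(a, b) uses the pooled-variance test by default and returns the same t statistic.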

This suggests that learning through instructional videos alone cannot fully convey perceptions of actual operability. In contrast, the relatively small difference in PU indicates that the functional value of the system can be conveyed to some extent through indirect methods. These results reveal the importance of direct experience in acceptance and indicate that system design should incorporate strategies that consider perceptions of both direct users and indirect viewers.

Additionally, we analyzed the relationship between users’ proficiency in 3D animation tools (excluding Blender) and viewers’ GQS responses. The results revealed negative correlations between 3D animation proficiency and ‘Dislike-Like’ (ρ = -0.497, p = 0.036), ‘Unfriendly-Friendly’ (ρ = -0.567, p = 0.014), and ‘Anxious-Relaxed’ (ρ = -0.512, p = 0.030).

In contrast, experience with motor-driven projects showed a positive correlation with viewers’ ‘Irresponsible-Responsible’ ratings (ρ = 0.652, p = 0.003). These results suggest that users’ different background experiences are associated with distinct patterns in viewers’ perceptions of the final outputs.

Among the user group, industrial design/product design majors reported the highest 3D animation proficiency (M = 5.077, SD = 0.641), and their primary tool was KeyShot. One possible explanation for the negative correlation is that users accustomed to KeyShot’s animation environment, which focuses on expressing product functionality through linear movements, tended to create similarly restricted movements when using Blender.

These results indicate that users’ background experiences are associated not only with their own experience of using the system but also with how viewers perceive the resulting robot motions. In particular, the linear, efficiency-oriented motion styles of users with product design backgrounds may be perceived by viewers as lacking emotional intimacy, which is a factor to consider in motion design.

7. 5. In-depth Interview

Qualitative analysis revealed key insights regarding the system’s advantages and limitations. Rapid testing emerged as the primary strength: users described “fast and convenient motion modification” and “time reduction” and called the system “intuitive,” consistent with Nielsen’s learnability criterion (Nielsen, 1994). The system also offered high freedom in motion expression, enabling designers to “implement desired motions.” Additionally, the preview function was highly valued for being “intuitively verifiable in Blender,” which enhanced user satisfaction in achieving creative goals, a critical factor in creative environments (Carroll & Thomas, 1988).

Regarding limitations, users identified persistent adoption barriers including “coding barriers,” the “need to learn Blender,” and “lack of consideration for physical torque.” While the system reduced the learning burden compared to hard coding methods, coding knowledge and Blender proficiency remain prerequisites for effective utilization. Although accessibility has improved, users still require substantial learning to achieve efficient workflow performance. The findings indicate that despite enhanced usability and satisfaction, complete accessibility requires further refinement to eliminate technical barriers.

8. Conclusion

Through this study, we have identified how specific usability characteristics of animation-based workflows relate to user acceptance beyond what previous research reported as “easier to use” or “faster prototyping,” and, crucially, how these characteristics relate to viewer perception of the resulting robot motions. Traditional processes required designers to depend on engineers for physical implementation, and programming methods limited the design of natural movements (Winkel et al., 2021; Lee et al., 2024). Our system enables designers to design motion directly and immediately conduct physical tests, reducing the time from implementation to verification.

Several findings point to improved accessibility for designers working alongside engineers. Significant positive correlations of Multi Motors Implementation Ease and Motion Modification Convenience with TAM suggest that the system is accessible even without programming knowledge. This is further supported by the fact that industrial and product design majors, who made up 72.2% of the user group, successfully implemented multi-motor robot motions.

Blender experience showed no significant correlation with TAM, suggesting that the keyframe-based approach may be intuitive regardless of software proficiency. This is consistent with the interface design principle that effective tools let users focus on core work rather than on learning the tool (Norman, 1983), which may help narrow interdisciplinary gaps where software proficiency would otherwise determine performance.

Regarding the relationship between user experience and viewer perception (RQ2, RQ3), our findings reveal a selective pattern. While users’ overall TAM scores showed no significant correlation with viewers’ robot perception dimensions (RQ3), specific usability characteristics, particularly Motor-Animation Match, Motion Expression Freedom, and Motion Modification Convenience, correlated with individual viewer perception items (RQ2). This suggests that the design process carries over to perceived output through specific pathways rather than as a direct transfer. Notably, Motor-Animation Match correlated with several Likability items and with the ‘Dead-Alive’ Animacy item, suggesting that correspondence between the virtual animation and the physical motion is central to how the robot is perceived. These correlations indicate that not all aspects of an improved authoring experience enhance viewer perception; rather, particular design qualities, especially the fidelity of the animation-to-physical translation and the freedom to express motion, appear to link creator intention and viewer experience. This finding underscores that while animation-based tools improve designer workflow, their relationship to robot perception runs through specific design execution qualities rather than general system usability alone.

Disney’s Illusion of Life principles mentioned earlier, which are complex to hard-code, became easy to implement through Blender’s keyframes and graph editor; they function beyond movement smoothing to endow robots with animacy. The correlation with the ‘Dead-Alive’ item suggests that the 3D animation is linked to perceived robot animacy, offering a way to enhance animacy by using animation tools as a preview for physical movement design. This animation-based approach enables designers to create movements that are not only mechanically smooth but also perceptually alive, which may improve how viewers perceive and interact with robots.
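The ‘slow in and slow out’ principle that the graph editor exposes can be illustrated by sampling keyframes into per-frame joint angles. The sketch below is a simplification under stated assumptions: it uses a cubic smoothstep curve as a stand-in for Blender’s default Bézier interpolation, ignores custom handles, and stops at angles in degrees rather than servo commands. The names sample_angles, linear, and ease_in_out are hypothetical and do not describe Blender2Motor’s actual conversion code.

```python
from typing import Callable, Sequence

Keyframe = tuple[int, float]  # (frame number, joint angle in degrees)


def linear(t: float) -> float:
    """Constant-speed interpolation between two keys."""
    return t


def ease_in_out(t: float) -> float:
    """Cubic smoothstep: zero velocity at both keys ('slow in and slow out').

    A simplified stand-in for a Bezier F-curve, not Blender's exact curve.
    """
    return t * t * (3.0 - 2.0 * t)


def sample_angles(keys: Sequence[Keyframe],
                  easing: Callable[[float], float]) -> list[float]:
    """Return one angle per frame, from the first keyframe to the last."""
    angles: list[float] = []
    for (f0, a0), (f1, a1) in zip(keys, keys[1:]):
        for f in range(f0, f1):
            t = (f - f0) / (f1 - f0)  # normalized time within this segment
            angles.append(a0 + (a1 - a0) * easing(t))
    angles.append(keys[-1][1])  # include the final keyframe itself
    return angles
```

With keyframes at frame 0 (0°) and frame 10 (90°), linear interpolation moves the joint 9° in the first frame, whereas the eased curve moves it about 2.5°, so the motion starts and stops gently while both curves pass through 45° at frame 5.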

The transition from a ‘position-time’ mathematical approach to a ‘keyframe-graph’ animation approach reflects a shift from viewing robots as ‘machines to manipulate’ to viewing them as ‘objects to express’ (Konijn & Hoorn, 2020). This may provide methodological foundations for enhancing the social and emotional acceptance of contemporary robots. The findings also suggest moving from sequential to parallel development, in which designers and engineers make decisions simultaneously (Martin et al., 2023). Furthermore, the system may be particularly effective for research using the Wizard of Oz methodology, where designers can rapidly prototype and test robot behaviors without extensive programming.

9. Limitations and Future Works

While this research makes several contributions, it also has limitations.

First, a fully code-free environment has not been achieved. The system still requires an integrated development environment (IDE) and Python files for execution, and users noted that “coding knowledge is still necessary.”

Second, Blender’s learning curve creates new barriers to entry. Learning the rigging and animation features requires high concentration and conscious effort, which particularly burdens users without 3D graphics experience (Newell & Rosenbloom, 2013). Participants noted that “rigging steps remain difficult for Blender novices.” Future research should focus on simplifying the initial setup through templates or add-ons.

Third, physical constraints are insufficiently considered. The system converts keyframe animation directly into motor angles, ignoring robot weight, inertia, and torque limits. Animations that look natural may therefore be impossible or inefficient to execute on actual robots (Hoshyari et al., 2019). Integrating physical motor constraints to verify feasibility at the design stage would address this limitation.
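A first step toward such design-stage verification could be a kinematic check of the sampled angle curve. The sketch below flags frames whose angle leaves a joint’s range or whose finite-difference speed exceeds a rated maximum; ServoLimits, check_feasibility, and the limit values are hypothetical placeholders for datasheet figures. It deliberately covers only angle and speed limits: torque and inertia, which this limitation also names, would require a mass model of the robot and are not checked.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class ServoLimits:
    """Hypothetical per-joint limits; real values would come from a datasheet."""
    min_angle: float  # degrees
    max_angle: float  # degrees
    max_speed: float  # degrees per second


def check_feasibility(angles: Sequence[float], fps: float,
                      limits: ServoLimits) -> list[str]:
    """Flag frames whose angle or frame-to-frame speed exceeds the limits.

    Only kinematic limits are checked; torque and inertia are ignored.
    """
    problems: list[str] = []
    for i, a in enumerate(angles):
        if not limits.min_angle <= a <= limits.max_angle:
            problems.append(f"frame {i}: angle {a:.1f} deg outside range")
    for i in range(1, len(angles)):
        # Finite-difference speed between consecutive frames, in deg/s.
        speed = abs(angles[i] - angles[i - 1]) * fps
        if speed > limits.max_speed:
            problems.append(
                f"frame {i}: speed {speed:.0f} deg/s exceeds {limits.max_speed:.0f}")
    return problems
```

For instance, with a hypothetical 600 deg/s limit at 24 fps, the sequence [0, 10, 40, 45] is flagged only at frame 2, where a 30° jump within one frame implies 720 deg/s.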

Fourth, task complexity affects system efficiency. Participant feedback that “simple motions take longer” suggests that the system is most effective for complex, expressive movements but adds overhead for simple, functional ones (Almaatouq et al., 2021).

Fifth, the sample sizes (18 users and 24 viewers) limit the statistical analysis: they are sufficient for detecting large effects but not small effects or detailed group comparisons. Larger samples are needed to verify generalizability.
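The power limitation can be quantified with the standard Fisher z approximation for a correlation test. The sketch below uses it with a two-sided α of 0.05 and 80% power; applying this Pearson-based formula to Spearman’s ρ is itself an approximation, so the numbers are indicative only. Under these assumptions, 18 users can detect with 80% power only correlations of about |r| ≥ 0.62, and 24 viewers only about |r| ≥ 0.55, while a small effect of r = 0.1 would require roughly 783 participants (85 for r = 0.3).

```python
from math import atanh, ceil, sqrt, tanh
from statistics import NormalDist


def _z_sum(alpha: float, power: float) -> float:
    """z quantile for a two-sided test at alpha plus the z quantile for power."""
    z = NormalDist()
    return z.inv_cdf(1 - alpha / 2) + z.inv_cdf(power)


def min_detectable_r(n: int, alpha: float = 0.05, power: float = 0.80) -> float:
    """Smallest |r| detectable with the given power (Fisher z approximation)."""
    return tanh(_z_sum(alpha, power) / sqrt(n - 3))


def required_n(r: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate sample size needed to detect a correlation of size r."""
    return ceil((_z_sum(alpha, power) / atanh(r)) ** 2 + 3)
```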

Sixth, the viewer evaluation relied on a single video, which is a clear limitation. Because participants received explanations of the proposed system before observing the robot behaviors, their interpretations may have been subject to framing bias, yielding more favorable assessments rather than purely perceptual responses.

Considering both the contributions and limitations of this study, future research should focus on several key areas: comprehensive user experience evaluations of robots whose motions were developed with the proposed framework and the Blender2Motor system; emotional and customer-perspective assessments beyond the viewer perspective; and comparative usability studies between robots using animation-based principles and those with traditionally programmed motions (Chatterjee et al., 2025; Schulz et al., 2019).

Acknowledgments

This work was supported by the Industrial Technology Innovation Program (20018805) funded by the Ministry of Trade, Industry & Energy (MOTIE, Korea).

This work was supported by the 2025 Hongik University Innovation Support Program Fund.

Notes

Copyright: This is an Open Access article distributed under the terms of the Creative Commons Attribution Non-Commercial License (http://creativecommons.org/licenses/by-nc/3.0/), which permits unrestricted educational and non-commercial use, provided the original work is properly cited.

References

Alexandrova, S., Tatlock, Z., & Cakmak, M. (2015, May). RoboFlow: A flow-based visual programming language for mobile manipulation tasks. In 2015 IEEE international conference on robotics and automation (ICRA) (pp. 5537-5544). IEEE.

[https://doi.org/10.1109/ICRA.2015.7139973]

Almaatouq, A., Alsobay, M., Yin, M., & Watts, D. J. (2021). Task complexity moderates group synergy. Proceedings of the National Academy of Sciences, 118(36), e2101062118.

[https://doi.org/10.1073/pnas.2101062118]

Andrade, A., Cedillo, J. A., Lozada, J. H., & Zarate, I. R. (2013). Robotic arm control with Blender. Journal of Emerging Trends in Computing and Information Sciences, 4(4), 382-386.

Balit, E., Vaufreydaz, D., & Reignier, P. (2018). PEAR: Prototyping expressive animated robots - A framework for social robot prototyping. In Proceedings of the 13th International Joint Conference on Computer Vision, Imaging and Computer Graphics Theory and Applications (VISIGRAPP 2018) - Volume 2: HUCAPP (pp. 44-54).

[https://doi.org/10.5220/0006622600440054]

Bartneck, C., Kulić, D., Croft, E., & Zoghbi, S. (2009). Measurement instruments for the anthropomorphism, animacy, likeability, perceived intelligence, and perceived safety of robots. International journal of social robotics, 1(1), 71-81.

[https://doi.org/10.1007/s12369-008-0001-3]

Bartneck, C., Soucy, M., Fleuret, K., & Sandoval, E. B. (2015). The robot engine - Making the Unity 3D game engine work for HRI. In Proceedings of the 24th IEEE International Symposium on Robot and Human Interactive Communication (RO-MAN), 431-437. IEEE.

[https://doi.org/10.1109/ROMAN.2015.7333561]

Bruyninckx, H. (2004). Blender for robotics and robotics for Blender. Dept. of Mechanical Engineering, KU Leuven, Belgium.

Buys, K., De Laet, T., Smits, R., & Bruyninckx, H. (2010, November). Blender for robotics: integration into the Leuven paradigm for robot task specification and human motion estimation. In International Conference on Simulation, Modeling, and Programming for Autonomous Robots (pp. 15-25). Berlin, Heidelberg: Springer Berlin Heidelberg.

[https://doi.org/10.1007/978-3-642-17319-6_5]

Carroll, J. M., & Thomas, J. C. (1988). Fun. ACM SIGCHI Bulletin, 19(3), 21-24.

[https://doi.org/10.1145/49108.1045604]

Chatterjee, T., Guzzo, A., Tlaie, A., Kaddour, A., Papaxanthis, C., Gaveau, J., & Dominey, P. F. (2025). Following the Robot's Lead: Predicting Human and Robot Movement from EEG in a Motor Learning HRI Task. iScience. Advance online publication.

[https://doi.org/10.1016/j.isci.2025.112914]

Coleman, D., Sucan, I., Chitta, S., & Correll, N. (2014). Reducing the barrier to entry of complex robotic software: A MoveIt! case study. arXiv preprint arXiv:1404.3785.

Conlan, C. (2017). The Blender Python API: Precision 3D modeling and add-on development. Apress.

[https://doi.org/10.1007/978-1-4842-2802-9]

Coronado, E., Mastrogiovanni, F., Indurkhya, B., & Venture, G. (2020). Visual programming environments for end-user development of intelligent and social robots, a systematic review. Journal of Computer Languages, 58, 100970.

[https://doi.org/10.1016/j.cola.2020.100970]

Dautenhahn, K. (2007). Socially intelligent robots: dimensions of human-robot interaction. Philosophical transactions of the royal society B: Biological sciences, 362(1480), 679-704.

[https://doi.org/10.1098/rstb.2006.2004]

Davis, F. D. (1989). Perceived usefulness, perceived ease of use, and user acceptance of information technology. MIS quarterly, 319-340.

[https://doi.org/10.2307/249008]

Farley, A., Wang, J., & Marshall, J. A. (2022). How to pick a mobile robot simulator: A quantitative comparison of CoppeliaSim, Gazebo, MORSE and Webots with a focus on accuracy of motion. Simulation Modelling Practice and Theory, 120, 102629.

[https://doi.org/10.1016/j.simpat.2022.102629]

Fresnillo, P. M., Vasudevan, S., Mohammed, W. M., Lastra, J. L. M., & Garcia, J. A. P. (2023). Extending the motion planning framework-MoveIt with advanced manipulation functions for industrial applications. Robotics and Computer-Integrated Manufacturing, 83, 102559.

[https://doi.org/10.1016/j.rcim.2023.102559]

Guillén Ruiz, S., Calderita, L. V., Hidalgo-Paniagua, A., & Bandera Rubio, J. P. (2020). Measuring smoothness as a factor for efficient and socially accepted robot motion. Sensors, 20(23), 6822.

[https://doi.org/10.3390/s20236822]

Hackaday. (2022, May 11). Animate your robot in Blender. https://hackaday.com/2022/05/11/animate-your-robot-in-blender/

Hao, P., Lu, T., Cai, Y., & Wang, S. (2019, December). Programming by visual demonstration for pick-and-place tasks using robot skills. In 2019 IEEE International Conference on Robotics and Biomimetics (ROBIO) (pp. 1103-1108). IEEE.

[https://doi.org/10.1109/ROBIO49542.2019.8961481]

Hendriks, T. (2024). Blender Servo Animation [Computer software]. GitHub. https://github.com/timhendriks93/blender-servo-animation

hisprofile. (2024). Universal Remote [Computer software]. GitHub. https://github.com/hisprofile/Universal-Remote

Hoffman, G., & Ju, W. (2014). Designing robots with movement in mind. Journal of Human-Robot Interaction, 3(1), 91-122.

[https://doi.org/10.5898/JHRI.3.1.Hoffman]

Hoshyari, S., Xu, H., Knoop, E., Coros, S., & Bächer, M. (2019). Vibration-minimizing motion retargeting for robotic characters. ACM Transactions on Graphics (TOG), 38(4), 1-14.

[https://doi.org/10.1145/3306346.3323034]

Hu, Y., Huang, P., Sivapurapu, M., & Zhang, J. (2025). ELEGNT: Expressive and Functional Movement Design for Non-anthropomorphic Robot. arXiv preprint arXiv:2501.12493.

Ji, W., Wang, Y., Liu, H., & Wang, L. (2018). Interface architecture design for minimum programming in human-robot collaboration. Procedia CIRP, 72, 129-134.

[https://doi.org/10.1016/j.procir.2018.03.013]

Johnston, O., & Thomas, F. (1981). The illusion of life: Disney animation (p. 576). New York: Disney Editions.

knee-koh. (2024). MarIOnette [Computer software]. GitHub. https://github.com/knee-koh/MarIOnette

Kondaveeti, H. K., Kumaravelu, N. K., Vanambathina, S. D., Mathe, S. E., & Vappangi, S. (2021). A systematic literature review on prototyping with Arduino: Applications, challenges, advantages, and limitations. Computer Science Review, 40, 100364.

[https://doi.org/10.1016/j.cosrev.2021.100364]

Konijn, E. A., & Hoorn, J. F. (2020). Differential facial articulacy in robots and humans elicit different levels of responsiveness, empathy, and projected feelings. Robotics, 9(4), 92.

[https://doi.org/10.3390/robotics9040092]

Lacquaniti, F., Terzuolo, C., & Viviani, P. (1983). The law relating the kinematic and figural aspects of drawing movements. Acta psychologica, 54(1-3), 115-130.

[https://doi.org/10.1016/0001-6918(83)90027-6]

Lee, J. E., Bylard, A., Sun, R., & Sentis, L. (2024, May). On the performance of jerk-constrained time-optimal trajectory planning for industrial manipulators. In 2024 IEEE International Conference on Robotics and Automation (ICRA) (pp. 9772-9778). IEEE.

[https://doi.org/10.1109/ICRA57147.2024.10610437]

Lee Rodgers, J., & Nicewander, W. A. (1988). Thirteen ways to look at the correlation coefficient. The American Statistician, 42(1), 59-66.

[https://doi.org/10.1080/00031305.1988.10475524]

Lin, V., & Chen, N. S. (2023). Interdisciplinary training on instructional design using robots and IoT objects: a case study on undergraduates from different disciplines. Computer Applications in Engineering Education, 31(3), 583-601.

[https://doi.org/10.1002/cae.22601]

Martin, G., Détienne, F., & Lavigne, E. (2023, June). Negotiation in collaborative assessment of design solutions: an empirical study on a Concurrent Engineering process. In Advances in Concurrent Engineering (pp. 129-135). CRC Press.

[https://doi.org/10.1201/9781003423508-17]

Mcharek, M., Azib, T., Larouci, C., & Hammadi, M. (2019, October). Collaboration and multidisciplinary design optimization for mechatronic systems. In IECON 2019-45th Annual Conference of the IEEE Industrial Electronics Society (Vol. 1, pp. 624-629). IEEE.

[https://doi.org/10.1109/IECON.2019.8927685]

Nakayama, M., & Yamanaka, S. (2016, October). Perception of animacy by the linear motion of a group of robots. In Proceedings of the Fourth International Conference on Human Agent Interaction (pp. 3-9).

[https://doi.org/10.1145/2974804.2974806]

Newell, A., & Rosenbloom, P. S. (2013). Mechanisms of skill acquisition and the law of practice. In Cognitive skills and their acquisition (pp. 1-55). Psychology Press.

Nguyen, K. D., Ng, T. C., & Chen, I. M. (2008). On algorithms for planning s-curve motion profiles. International Journal of Advanced Robotic Systems, 5(1), 11.

[https://doi.org/10.5772/5652]

Nguyen, M., & Mougenot, C. (2022). A systematic review of empirical studies on multidisciplinary design collaboration: Findings, methods, and challenges. Design Studies, 81, 101120.

[https://doi.org/10.1016/j.destud.2022.101120]

Nielsen, J. (1994). Usability engineering. Morgan Kaufmann.

[https://doi.org/10.1016/B978-0-08-052029-2.50009-7]

Norman, D. A. (1983, December). Design principles for human-computer interfaces. In Proceedings of the SIGCHI conference on Human Factors in Computing Systems (pp. 1-10).

[https://doi.org/10.1145/800045.801571]

Pearson, K. (1895). VII. Note on regression and inheritance in the case of two parents. proceedings of the royal society of London, 58(347-352), 240-242.

[https://doi.org/10.1098/rspl.1895.0041]

Ribeiro, T., & Paiva, A. (2012). The illusion of robotic life: Principles and practices of animation for robots. In Proceedings of the 7th Annual ACM/IEEE International Conference on Human-Robot Interaction (HRI'12), 383-390.

[https://doi.org/10.1145/2157689.2157814]

Rivera-Pinto, A., Kildal, J., & Lazkano, E. (2024). Toward programming a collaborative robot by interacting with its digital twin in a mixed reality environment. International Journal of Human-Computer Interaction, 40(17), 4745-4757.

[https://doi.org/10.1080/10447318.2023.2221599]

Grandia, R., Knoop, E., Hopkins, M. A., Wiedebach, G., Bishop, J., Pickles, S., ... & Bächer, M. (2024). Design and control of a bipedal robotic character. In Robotics: Science and Systems (RSS).

Schulz, T., Torresen, J., & Herstad, J. (2019). Animation techniques in human-robot interaction user studies: A systematic literature review. ACM Transactions on Human-Robot Interaction (THRI), 8(2), 1-22.

[https://doi.org/10.1145/3317325]

Serifi, A., Grandia, R., Knoop, E., Gross, M., & Bächer, M. (2024, December). Robot motion diffusion model: Motion generation for robotic characters. In SIGGRAPH asia 2024 conference papers (pp. 1-9).

[https://doi.org/10.1145/3680528.3687626]

Singh, O., & Ray, A. K. (2024). A physical-virtual based digital twin robotic hand. International Journal on Interactive Design and Manufacturing (IJIDeM), 18(7), 4829-4839.

[https://doi.org/10.1007/s12008-024-01773-7]

Spearman, C. (1961). The proof and measurement of association between two things.

[https://doi.org/10.1037/11491-005]

Szafir, D., & Szafir, D. A. (2021, March). Connecting human-robot interaction and data visualization. In Proceedings of the 2021 ACM/IEEE International Conference on Human-Robot Interaction (pp. 281-292).

[https://doi.org/10.1145/3434073.3444683]

Thorgeirsson, S., Weidmann, T. B., Weidmann, K. H., & Su, Z. (2024, March). Comparing cognitive load among undergraduate students programming in python and the visual language algot. In Proceedings of the 55th ACM Technical Symposium on Computer Science Education (Vol. 1) (pp. 1328-1334).

[https://doi.org/10.1145/3626252.3630808]

van Breemen, A. J. N. (2004). Animation engine for believable interactive user-interface robots. In Proceedings of the 2004 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2004, Vol. 3) (pp. 2873-2878). IEEE.

[https://doi.org/10.1109/IROS.2004.1389845]

Van Waveren, S., Carter, E. J., Örnberg, O., & Leite, I. (2021). Exploring non-expert robot programming through crowdsourcing. Frontiers in Robotics and AI, 8, 646002.

[https://doi.org/10.3389/frobt.2021.646002]

Vicente, A. J. O., Tan, T. A. G., & Yu, A. R. O. (2018). Collaborative approach in software engineering education: An interdisciplinary case. Journal of Information Technology Education. Innovations in Practice, 17, 127-140.

[https://doi.org/10.28945/4062]

Wang, Y., Peseckis, A., Jiang, Z., & Gleicher, M. (2024). Motion comparator: Visual comparison of robot motions. IEEE Robotics and Automation Letters.

[https://doi.org/10.1109/LRA.2024.3430649]

Winkle, K., Senft, E., & Lemaignan, S. (2021). LEADOR: A method for end-to-end participatory design of autonomous social robots. Frontiers in Robotics and AI, 8, 704119.

[https://doi.org/10.3389/frobt.2021.704119]